Special acknowledgement to Michael Schuckers, St. Lawrence University, for advice regarding statistics for biometric evaluation.

1. Revision History

Line-by-line comparisons for Pull Requests can be viewed in the GitHub Repository by viewing the Pull Requests and selecting the "Closed" Pull Requests, or by adding the PR number to the end of the URL, for example, https://github.com/fido-alliance/biometrics-requirements/pull/9.

| Date | Pull Request | Document version | Description |

|---|---|---|---|

| | | 0.1 | Initial Draft |

| 2017-01-05 | #9 | 0.2 | Population Section Edits |

| 2017-02-02 | #14, #17, #18, #19, #21, #23, #24, #34 | 0.3 | Bootstrapping, ISO Terms, Number of Subjects, Test Visits, Genuine Verification Transaction, removed Test Environment, Report to Vendor, and editorial issues. |

| 2017-03-02 | #36 | 0.5 | Minor editorial corrections from Feb 16 and March 2 calls. |

| 2017-03-23 | #38 | 0.6 | Introduction to Bootstrapping and other editorial issues. |

| 2017-03-29 | #42 | 0.7 | New Key Words, and Self-Attestation FAR Requirement (Optional), and Self-Attestation sections. Rule of 3 and Bootstrapping sections split into FAR and FRR. |

| 2017-04-11 | #44 | 0.8 | Minor editorial corrections from March 30 call. |

| 2017-04-14 | #45 | 0.9 | New Revision History. Expanded RFC 2119 Key Word explanation. New Target of Evaluation section. Reworded False Reject Rate, and False Accept Rate sections. Edits to Number of Subjects, Population, and Bootstrapping: FAR. New Biometric Reference Adaptation and Enrollment sections. Added inline issues based on comments from Jonas Andersson. FRR requirement at 3%. |

| 2017-04-27 | #46 | 0.10 | Removed "Active Impostor Attempt", replaced with "Zero-Effort Impostor Attempt". Added "Arithmetic Mean". Removed Personnel Terms that were not used in the document. In Bootstrapping: FAR section replaced confidence interval with FAR distribution curve. Changed SHOULD to SHALL in Test Reports section. For Genuine Verification Transactions, added "Test Subjects SHALL conduct 5 genuine verification transactions." Added inline issues. |

| 2017-05-09 | #47 | 0.11 | Added notes about attempts. |

| 2017-05-24 | #48 | 0.12 | Added Test Procedures for Presentation Attack Detection (PAD). |

| 2017-06-08 | #52 | 0.13 | Clean up of Test Reports sections. Added editors. |

| 2017-06-27 | #55, #56 | 0.14 | Added KaTeX formatting for the FRR and FAR formulas. |

| 2017-08-03 | #57 | 0.15 | Additional PAD Requirements - Triage of Presentation Attacks. |

| 2017-08-03 | #53 | 0.16 | Added Rate Limit Requirement and mapping to Authenticator Security Requirement 3.9. |

| 2017-08-03 | #54 | 0.17 | Confidence Interval at 80%, Bootstrapping FAR Figure, Minimum number of subjects at 245, and minimum of 123 unique persons in the test crew. |

| 2017-08-03 | #58, #59, #60, #61 | 0.18 | Editorial corrections. |

| 2017-08-03 | #63 | 0.19 | Further updates for 80% confidence interval, failure to acquire will not be considered during off-line FAR testing. |

| 2017-08-31 | #64, #65 | 0.20 | Offline testing of FAR updated from N(N-1)/2 to (N(N-a)/2, 4 fingers instead of two. Corrected usage of MUST and SHOULD to SHALL. Added details to Self-Attestation FAR, and Bootstrapping: FAR sections. Updated Report to Vendor to Report to FIDO, and added information that should NOT be included. Populated PAI Species for Fingerprint, and for Face sections. |

| 2017-09-27 | #77 | 0.21 | Added additional rows to the Self-Attestation Number of Subjects table. Updated number of subjects for PAD from 4 to 10. Added text to PAI Species for Iris/Eye Section. Updates to the Impostor Presentation Attack Transactions and Impostor Presentation Attack Errors sections. |

| 2017-10-12 | #76, #75, #78 | 0.22 | #76: Updates related to PAI species for IAPAR. Added biometric characteristic data as a requirement for the FIDO Reports. Added a requirement for Labs to get approval for the PAI species for modalities not covered in this requirements document prior to completing an evaluation. Other editorial corrections around transactions vs. attempts. #75: Added Rule of 3 Table to Rule of 3: FAR Section. #78: Clarifications to the FAR calculation. Rate limiting number of attempts shall be limited to 5. Removed the Pre-Verification section. Clarifications for stored verification transactions. |

| 2017-10-26 | #80 | 0.23 | Added Self-Attestation for FRR (Optional) section. |

| 2017-12-07 | #84, #85 | 0.24 | Added PAI for Voice Section, clean up of open issues. |

| 2017-12-22 | #87 | 0.25 | PAI Species for Voice edits |

| 2018-1-18 | #98 | 0.26 | Multiple edits, most editorial. Added requirement to PAD < 50% for all PAI species tested in addition to <20% for 5/6 Level A and 3/4 Level B. |

| 2018-1-18 | #100 | 0.27 | Multiple edits, most editorial. Added requirement for multiple templates. |

| 2019-3-10 | | 0.3 | Minor change to make bootstrapping FAR more clear. |

| 2019-05-30 | | 1.0 | Editorial upgrade of version number for publication |

| 2019-06-06 | 133, 134, 135, 126 | 1.1 | Addressing issues 133, 134, 135, 126 |

| 2019-08-15 | 141 | 1.2 | Addressing issue 141 |

| 2020-08-26 | 148 to 194 | 2.0 | Edits to definitions for transactions and attempts, Change FRR to 5%, Change in PAD requirements to 7%, edits to PA levels, Edits to test environment |

| 2021-9-30 | 207, 212 | 2.1 | Added levels that map to using 15% (Level 1) and 7% (Level 2) thresholds for IAPAR for PAD |

| 2021-9-30 | 220 | 2.2 | Changed PAD Enrollment Subjects from 25 to 15 and PAI Level B Species from 6 to 8 |

| 2022-1-6 | 228 | 2.2.1 | Editorial Changes due to laboratory feedback |

| 2022-3-3 | 226 | 2.3 | Multimodal authenticators |

| 2022-3-3 | 242 | 3.0 | Replaced Level 1 with new framework of BioLevels, updated definitions to align with ISO |

2. Introduction

This document provides implementation requirements for Vendors and Test Procedures which FIDO Accredited Biometric Laboratories can use for evaluating the biometric component of a FIDO Authenticator. The biometric component of the authenticator can be certified either as a component of the authenticator or as a separate biometric subsystem, in which case the biometric certification can be used as input to a FIDO authenticator certification which includes the biometric subsystem. The test will focus on the passing requirements for biometric performance for the following metrics.

The output of this test is provided to the FIDO certification program and will be used as a component to FIDO Certified products. The data will also be incorporated in the FIDO Metadata Service (MDS).

Associated documents include the FIDO Biometrics Laboratory Accreditation Policy and the FIDO Biometrics Certification Policy.

Biometrics requirements SHALL be reviewed periodically to assess their appropriateness.

2.1. Reference Documents

The following ISO standards are normative references to this certification program:

ISO/IEC 19795-1:2021 Information technology -- Biometric performance testing and reporting -- Part 1: Principles and framework [ISOIEC-19795-1]

ISO/IEC 19795-2:2007 Information technology -- Biometric performance testing and reporting -- Part 2: Testing methodologies for technology and scenario evaluation [ISOIEC-19795-2]

ISO/IEC 19795-5:2011 Information technology -- Biometric performance testing and reporting -- Part 5: Access control scenario and grading scheme. [ISOIEC-19795-5]

ISO/IEC TS 19795-9:2019 Information technology — Biometric performance testing and reporting — Part 9: Testing on mobile devices [ISOIEC-19795-9]

ISO/IEC 30107-1:2016 Information technology -- Biometric presentation attack detection -- Part 1: Framework [ISOIEC30107-1]

ISO/IEC 30107-3:2017 Information technology -- Biometric presentation attack detection -- Part 3: Testing and reporting [ISOIEC-30107-3]

ISO/IEC 30107-4:2020 Information technology — Biometric presentation attack detection — Part 4: Profile for testing of mobile devices [ISOIEC-30107-4]

ISO/IEC 2382-37:2022(en) Information technology — Vocabulary — Part 37: Biometrics [ISOBiometrics]

2.2. Audience

The intended audience of this document is the Certification Working Group (CWG), Biometric Assurance Subgroup, FIDO Administration, the FIDO Board of Directors, Biometric Authenticator Vendors, Biometric Subsystem Vendors and Test Labs.

The owner of this document is the Biometrics Assurance Subgroup.

2.3. FIDO Roles

- Certification Working Group (CWG)

-

FIDO working group responsible for the approval of policy documents and ongoing maintenance of policy documents once a certification program is launched.

- Biometrics Assurance Subgroup

-

FIDO subgroup of the CWG responsible for defining the Biometric Requirements and Test Procedures to develop the Biometrics Certification program and to act as an SME following the launch of the program.

- Vendor

-

Party seeking certification. Responsible for providing the testing harness to perform both online and offline testing that includes enrollment system (with data capture sensor) and verification software.

- Original Equipment Manufacturer (OEM)

-

Company whose goods are used as components in the products of another company, which then sells the finished items to users.

- Laboratory

-

Party performing testing. Testing will be performed by third-party test laboratories Accredited by FIDO to perform Biometric Certification Testing. See also, FIDO Accredited Biometrics Laboratory.

2.4. FIDO Terms

- FIDO Certified Authenticator

-

An Authenticator that has successfully completed FIDO Certification, and has a valid Certificate.

- FIDO Accredited Biometrics Laboratory

-

Laboratory that has been Accredited by the FIDO Alliance to perform FIDO Biometrics Testing for the Biometrics Certification Program.

- FIDO Member

-

A company or organization that has joined the FIDO Alliance through the Membership process.

2.5. Biometric Data and Evaluation Terms

- Biometric Claim

-

claim that a biometric capture subject is or is not the bodily source of a specified or unspecified biometric reference

Note 1 to entry: A biometric claim can be made by any user of the biometric system.

Note 2 to entry: The phrase "claim of identity" is often used to label this concept.

Note 3 to entry: Claims can be positive, i.e. that the biometric capture subject is enrolled; negative, i.e. that the biometric capture subject is not enrolled; specific, i.e. that the biometric capture subject is or is not enrolled as a specified biometric enrollee; or non-specific, i.e. that the biometric capture subject is or is not among the set or subset of biometric enrollees.

Note 4 to entry: Biometric claims are not necessarily made by the biometric capture subject.

Note 5 to entry: The biometric reference could be on a database, card or distributed throughout a network.

Note 6 to entry: The biometric claim has to fall within the biometric system boundary.

FIDO related note: Notes 1 through 6 above are part of the ISO definition. In the FIDO context, a FIDO authenticator is a personal device. The biometric reference is stored locally on the device. A claim within FIDO is when a person presents themselves to their own device. [ISOBiometrics]

- Capture Attempt

-

interaction of the biometric capture subject with the biometric capture subsystem (37.02.01) with the intent of producing a captured biometric sample

Note 1 to entry: The capture attempt is the interface between the presentation by the biometric capture subject and the action of the biometric capture subsystem.

Note 2 to entry: The “activity” taken can be on the part of the biometric capture subsystem or the biometric capture subject. [ISOBiometrics]

- Capture Transaction

-

one or more capture attempts with the intent of acquiring all of the biometric data from a biometric capture subject necessary to produce either a biometric reference or a biometric probe [ISOBiometrics].

- False Accept Rate (FAR)

-

proportion of biometric (37.01.01) transactions with false biometric claims erroneously accepted [ISOBiometrics]

- False Reject Rate (FRR)

-

proportion of verification transactions with true biometric claims erroneously rejected [ISOBiometrics]

- Failure-to-Acquire (FTA)

-

failure to accept for subsequent comparison the biometric sample of the biometric characteristic of interest output from the biometric capture process

Note 1 to entry: Acceptance of the output of a biometric capture process for subsequent comparison will depend on policy.

Note 2 to entry: Possible causes of failure to acquire include failure to capture, failure to extract, poor biometric sample quality, algorithmic deficiencies and biometric characteristics outside the range of the system. [ISOBiometrics]

- Failure-to-Acquire Rate (FTAR)

-

proportion of a specified set of biometric acquisition processes that were failures to acquire

Note 1 to entry: The results of the biometric acquisition processes may be biometric probes or biometric references.

Note 2 to entry: The experimenter specifies which biometric probe (or biometric reference) acquisitions are in the set as well as the criteria for deeming that a biometric acquisition process has failed.

Note 3 to entry: The proportion is the number of processes that failed divided by the total number of biometric acquisition processes within the specified set. [ISOBiometrics]

- Failure-to-Enrol (FTE)

-

failure to create and store a biometric enrolment data record (37.03.10) for an eligible biometric capture subject (37.07.03) in accordance with a biometric enrolment (37.05.03) policy

Note 1 to entry: Not enrolling someone ineligible to enrol (37.05.08) is not a failure to enrol. [ISOBiometrics]

- Failure-to-Enrol Rate (FTER)

-

proportion of a specified set of biometric enrolment transactions that resulted in a failure to enrol

Note 1 to entry: Basing the denominator on the number of biometric enrolment transactions can result in a higher value than basing it on the number of biometric capture subjects.

Note 2 to entry: If the FTER is to measure solely transactions that fail to complete due to quality of the submitted biometric data, the denominator should not include transactions that fail due to non-biometric reasons (i.e. lack of eligibility due to age or citizenship). [ISOBiometrics]

- Impostor Attack Presentation Accept Rate (IAPAR)

-

proportion of impostor attack presentations using the same PAI species that result in accept. See [ISOIEC-30107-3].

- Biometric Sample

-

analogue or digital representation of biometric characteristics prior to biometric feature extraction

EXAMPLE: A record containing the image of a finger is a biometric sample. [ISOBiometrics]

- Biometric Reference

-

one or more stored biometric samples, biometric templates or biometric models attributed to a biometric data subject and used as the object of biometric comparison

EXAMPLE: Face image stored digitally on a passport; fingerprint minutiae template on a National ID card or Gaussian Mixture Model for speaker recognition, in a database.

Note 1 to entry: A biometric reference may be created with implicit or explicit use of auxiliary data, such as Universal Background Models.

Note 2 to entry: The subject/object labelling in a comparison can be arbitrary. In some comparisons a biometric reference can potentially be used as the subject of the comparison with other biometric references or incoming biometric samples and input to a biometric algorithm for comparison. For example, in a duplicate enrolment check a biometric reference will be used as the subject for comparison against all other biometric references in the database. [ISOBiometrics]

- Target of Evaluation (TOE)

-

The product or system that is the subject of the evaluation. See the TOE section in this document.

- Biometric Presentation

-

interaction of the biometric capture subject and the biometric capture subsystem to obtain a signal from a biometric characteristic

Note 1 to entry: The biometric capture subject is not necessarily aware that a signal from a biometric characteristic is being captured. [ISOBiometrics]

- Verification Attempt

-

biometric claim and capture attempt(s) that together provide the inputs for comparison(s) [ISOBiometrics]

- Verification Transaction

-

one or more verification attempts resulting in resolution of a biometric claim [ISOBiometrics]

- Biometric Mated Comparison Trial

-

comparison of a biometric probe and a biometric reference from the same biometric capture subject and the same biometric characteristic as part of a performance test

Note 1 to entry: Biometric mated comparison trials have historically been referred to as “genuine trials”. However, the term “genuine” historically implied an intent on the part of the biometric capture subject. Ultimately, the trial has nothing to do with the intention of the biometric capture subject. [ISOBiometrics]

- Biometric Non-Mated Comparison Trial

-

comparison (37.05.07) of a biometric probe (37.03.14) and a biometric reference (37.03.16) from different biometric data subjects (37.07.05) as part of a performance test

Note 1 to entry: Biometric non-mated comparison trials have historically been referred to as “impostor trials”. However, they do not accurately model operational system behaviour in the presence of impostors.

Note 2 to entry: A set of biometric non-mated comparison trials need not contain all possible comparisons of biometric probes (37.03.14) and biometric references from different biometric data subjects. [ISOBiometrics]

- Online

-

Pertaining to execution of biometric enrollment or comparison directly following the biometric acquisition process [ISOIEC-19795-1].

- Offline

-

Pertaining to execution of biometric enrollment or comparison of stored biometric data subsequent to and disconnected from the biometric acquisition process

Note 1 to entry: Collecting a corpus of images or signals for offline enrolment and calculation of comparison scores allows greater control over which probe and reference images are to be used in any transaction. [ISOIEC-19795-1].

- Biometric Verification

-

process of confirming a biometric claim (37.06.04) through comparison (37.05.07)

Note 1 to entry: The term “verification”, in the above definition refers to verifying biometrics (37.01.01).

Note 2 to entry: Use of the term “authentication” as a substitute for biometric verification is deprecated. [ISOBiometrics]

- Stored Verification Transaction

-

A set of acquired biometric verification sample(s) from an on-line verification transaction, which is stored for use in off-line verification.

- Presentation attack instrument (PAI)

-

Biometric characteristic or object used in a presentation attack, in [ISOIEC30107-1].

- PAI species

-

Class of presentation attack instruments created using a common production method and based on different biometric characteristics, in [ISOIEC-30107-3].

2.6. Statistical Terms

- Arithmetic Mean

-

The average of a set of numerical values, calculated by adding them together and dividing by the number of terms in the set.

- Variance

-

V. Measure of the spread of a statistical distribution. [ISOIEC-19795-1].

- Confidence Interval

-

A lower estimate L and an upper estimate U for a parameter such as x such that the probability of the true value of x being between L and U is the stated value (e.g. 80%). [ISOIEC-19795-1].

2.7. Personnel Terms

- Test Subject

-

User whose biometric data is intended to be enrolled or compared as part of the evaluation. [ISOIEC-19795-1].

Note: For the purposes of this document, multiple fingers up to four fingers from one individual may be considered as different test subjects. Two eyes from one individual may be considered as different test subjects.

- Test Crew

-

Set of test subjects gathered for an evaluation [ISOIEC-19795-1].

- Target Population

-

Set of users of the application for which performance is being evaluated. See Section 4.3.4 in [ISOIEC-19795-1].

- Test Organization

-

functional entity under whose auspices the test is conducted [ISOIEC-19795-1].

2.8. Key Words

The key words “MUST”, “MUST NOT”, “REQUIRED”, “SHALL”, “SHALL NOT”, “SHOULD”, “SHOULD NOT”, “RECOMMENDED”, “MAY”, and “OPTIONAL” in this document are to be interpreted as described in [RFC2119].

-

SHALL indicates an absolute requirement, as does MUST.

-

SHALL NOT indicates an absolute prohibition, as does MUST NOT.

-

SHOULD indicates a recommendation.

-

MAY indicates an option.

2.9. Document Structure

This document outlines the Requirements and Test Procedures for the FIDO Biometrics Certification Program.

3. Requirements

This section lists the requirements for achieving FIDO Biometric Component Certification. Unless noted as "Optional", all requirements must be met in order to achieve certification. There are two levels of certification which have different thresholds for IAPAR metric for PAD assessment. Otherwise the testing procedure is the same for both levels.

Note: Performance Testing Requirements for FIDO certification are summarized in [ISOIEC-19795-9], Annex A

Note: Presentation Attack Detection Testing Requirements for FIDO certification are summarized in [ISOIEC-30107-4], Annex A

3.1. FIDO Certification Criteria

| | BioLevel 1 | BioLevel 1+ | BioLevel 2 | BioLevel 2+ |

|---|---|---|---|---|

| # Subjects for FAR/FRR | 25 | 245 | 25 | 245 |

| # Subjects for PAD | 15 | 15 | 15 | 15 |

| Lab Tested FAR | 1% | .01% | 1% | .01% |

| Lab Tested FRR | 7% | 5% | 7% | 5% |

| Lab Tested IAPAR (Modality Agnostic Requirements) | 15% | 15% | 7% | 7% |

| # Species A/B | 6/8 | 6/8 | 6/8 | 6/8 |

| # IAPAR Subjects | 15 | 15 | 15 | 15 |

| Documented Self Attestation FAR | Mandatory at <= 1/10000 | Optional at <= 1/10000 | Mandatory at <= 1/10000 | Optional at <= 1/10000 |

| Documented Self Attestation FRR | Mandatory at <= 5% | Optional at <= 5% | Mandatory at <= 5% | Optional at <= 5% |
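For test tooling, the lab-tested rows of the table above can be transcribed into a small lookup structure. The following is a minimal sketch only: the names `BioLevelCriteria`, `CRITERIA`, and `meets_lab_criteria` are hypothetical, the values are copied from the table rows as printed, and the strict "less than" comparison is an assumption. The caller is expected to pass whichever statistic each requirement applies to (for FAR and FRR, the upper bound of the 80% confidence interval described in the following sections).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BioLevelCriteria:
    subjects_far_frr: int  # "# Subjects for FAR/FRR"
    subjects_pad: int      # "# Subjects for PAD"
    max_far: float         # "Lab Tested FAR", as a fraction
    max_frr: float         # "Lab Tested FRR", as a fraction
    max_iapar: float       # "Lab Tested IAPAR", as a fraction


# Values transcribed from the certification criteria table (hypothetical structure).
CRITERIA = {
    "BioLevel 1": BioLevelCriteria(25, 15, 0.01, 0.07, 0.15),
    "BioLevel 1+": BioLevelCriteria(245, 15, 0.0001, 0.05, 0.15),
    "BioLevel 2": BioLevelCriteria(25, 15, 0.01, 0.07, 0.07),
    "BioLevel 2+": BioLevelCriteria(245, 15, 0.0001, 0.05, 0.07),
}


def meets_lab_criteria(level: str, far: float, frr: float, iapar: float) -> bool:
    """Return True if all three rates are below the limits for the given level.

    Assumption: limits are treated as strict upper limits ("less than").
    """
    limits = CRITERIA[level]
    return far < limits.max_far and frr < limits.max_frr and iapar < limits.max_iapar
```

For example, `meets_lab_criteria("BioLevel 2", far=0.005, frr=0.06, iapar=0.05)` evaluates the three lab-tested limits for BioLevel 2; the self-attestation rows are not modelled here.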

3.2. Target of Evaluation

The Target of Evaluation (TOE) for the purpose of the FIDO Biometric Certification Program SHALL include all functionality required for biometrics: the Biometric Data Capture, Signal Processing, Comparison, and Decision functionality, whether implemented in hardware or software.

TOE(s) which represent a range of configurations (e.g. different thickness of glass) covered by the Allowed Integration Document SHALL be provided to the FIDO laboratory for testing. The range of configurations to be tested is agreed upon by the FIDO lab and the vendor, and SHALL be approved by the FIDO Biometric Secretariat. The configurations tested SHALL be documented in the FIDO report.

The Allowed Integration Document SHALL be provided for reference to the Laboratory. It SHALL be coherent with the configuration and operation of the Test Harness. While the allowed integration document may follow a different structure, the laboratory shall ensure that the information that is required by the template for the Allowed Integration Document ([BiometricAIDTempate]) is present.

Also, the laboratory shall ensure that the descriptions of the allowed steps for integration and the expected environment of the TOE in the allowed integration document do not contain aspects that may negatively interfere with the functionality of the biometric component. As an example: if a developer allows protective covers to be added over a camera system, the laboratory shall ensure that those covers do not have a negative impact on the functionality of the biometric component. While this analysis can primarily be performed on a theoretical basis, the laboratory shall perform testing if a conclusion cannot be reached by theoretical means.

The environment under which the TOE operates SHALL also be described as part of the Allowed Integration Document. More details are provided in Test Environment.

Relevant product identification which can be referenced by both Biometrics supplier and OEM SHALL also be provided. The test results will be announced for the uniquely identified product.

The TOE SHALL be provided to the Laboratory by the Vendor in the form of a Common Test Harness which is set up so that the Laboratory can perform the testing efficiently and can identify which components of the Test Harness belong to the TOE.

TOEs can utilize the fusion of multiple biometrics. This often involves different modalities such as face and voice, but can also be performed with different algorithms or sensors for the same modality. Fusion approaches may also include multiple captures of the same modality within a transaction. Other similar approaches could also fall into this category of authenticators which use fusion.

For a TOE which utilizes such a combination of biometrics to authenticate, the vendor SHALL describe what modalities, algorithms, sensors, etc. are utilized for the TOE’s fusion approach. In addition, the vendor SHALL indicate whether (1) the TOE collects all biometrics for each transaction, prior to a decision; OR (2) the TOE collects biometrics in a sequential fashion, i.e., where a decision resulting from initial biometric(s) determines whether subsequent biometric(s) need to be collected. The FIDO certified laboratory will determine the testing needed based on how the TOE operates and this test protocol SHALL be approved by the FIDO Biometric Secretariat.

Details on changes to procedure are provided in MultiBiometric Performance Testing and MultiBiometric Testing for PAD.

Additionally, the test procedure may change if the solution changes as a result of the environment, e.g. visible camera during the day and NIR camera at night. This is discussed in Section Test Environment.

The same requirements apply for fusion with non-biometric information, e.g. PIN, geolocation, etc.

3.3. FIDO Biometric Performance Levels

The FIDO Biometric Certification Program uses False Reject Rate (FRR) and False Accept Rate (FAR) to measure Biometric Performance; both are further described in the next sections. The FAR and FRR are defined in terms of verification transactions.

Note: Requirements for performance levels for FAR and FRR take into account that vendors who seek to achieve certification through independent testing are likely to tune their systems to be stricter than the target requirements. This is to ensure that they pass certification, due to the inherent variability that occurs in any test. In other words, if a requirement is set at X%, vendors will target a value much stricter than X% so that they do not risk failing because of variability from one test to the next.

3.3.1. Verification Transactions

The Allowed Integration Document provided by the vendor establishes the details of what constitutes a verification transaction (maximum number of verification attempts and timeout period) for a TOE, following the definitions for verification attempt and verification transaction provided in Biometric Data and Evaluation Terms. The end of a verification transaction SHALL be the point at which an accept or reject decision is made by the biometric subsystem. A transaction SHOULD NOT exceed 30 seconds.

Note: Following the definitions from ISO/IEC 2382-37:2017(en) and provided in Biometric Data and Evaluation Terms, a verification attempt results in a biometric comparison while a verification transaction results in a resolution of a biometric claim (accept or reject). The ISO definition of a verification transaction is commonly thought of as a successful attempt that leads to a decision and not a failure to acquire. Vendors SHALL define a verification transaction at the point when the TOE provides a response to the user of accept or reject. This MAY involve one or more attempts. For example, for fingerprint recognition, verification attempts may include a user being asked to place the fingerprint again if the finger is wet, prior to making a decision. In another example, verification attempts may include a biometric system capturing multiple images in series, prior to making a decision.

Note: In the biometric certification testing, we test the biometric subsystem at the verification transaction level. Once a biometric component is integrated into a FIDO authenticator, a user verification decision for the purposes of FIDO authentication may involve multiple biometric verification transactions. For example, a FIDO authenticator may allow five biometric verification transactions and then switch to a fall-back authentication method, e.g., another biometric or a PIN or password.
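The relationship between attempts and transactions described above can be sketched in code. This is an illustration under stated assumptions, not the decision policy of any particular TOE: the names `AttemptOutcome` and `resolve_transaction` are hypothetical, and the sketch assumes that a transaction resolves to accept at the first accepted attempt and to reject once the vendor-defined maximum number of attempts or timeout period is reached. The actual policy is whatever the Allowed Integration Document specifies.

```python
from enum import Enum
from typing import Iterable, Tuple


class AttemptOutcome(Enum):
    ACCEPT = "accept"
    REJECT = "reject"
    FTA = "failure-to-acquire"


def resolve_transaction(
    attempts: Iterable[Tuple[float, AttemptOutcome]],
    max_attempts: int,
    timeout_s: float,
) -> str:
    """Resolve one verification transaction to "accept" or "reject".

    attempts: (elapsed_seconds, outcome) pairs in the order they occurred.
    max_attempts, timeout_s: vendor-defined limits (assumed to come from
    the Allowed Integration Document).
    """
    for index, (elapsed_s, outcome) in enumerate(attempts):
        # Attempts beyond the vendor-defined limits are not part of the transaction.
        if index >= max_attempts or elapsed_s > timeout_s:
            break
        if outcome is AttemptOutcome.ACCEPT:
            return "accept"
    # No attempt was accepted within the limits (all rejected and/or FTA).
    return "reject"
```

Under these assumptions, a wet-finger failure to acquire followed by a successful second placement resolves to a single accepted transaction rather than to one failure and one success.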

3.3.2. False Reject Rate (FRR)

Requirement

FRR is measured at the verification transaction level. The requirement at the various levels is given in Section FIDO Certification Criteria.

For BioLevel 1 and BioLevel 1+, the False Reject Rate SHALL meet the requirement of less than 7:100 for the upper bound of an 80% confidence interval. For BioLevel 1 and BioLevel 1+, self-attestation of at least 5% false reject rate is also required and described in Section Documented Self-Attestation FRR.

For BioLevel 2 and BioLevel 2+, the False Reject Rate SHALL meet the requirement of less than 5:100 for the upper bound of an 80% confidence interval.

The actual achieved FRR SHALL be documented by the laboratory. Requirements on reporting can be found in § 7.2 FAR/FRR Reporting Requirements.

The threshold, or operational point, SHALL be fixed during testing. It is set by the Vendor and SHALL correspond to the claimed False Accept Rate (FAR) value to be tested.

FRR SHALL be estimated by the equation given in [ISOIEC-19795-1], 8.3.2.

The calculation of FRR SHALL be based on:

FRR (%) = (Number of mated transactions for which the decision is reject or FTA happens for all attempts) / (Number of mated transactions conducted) * 100

All errors encountered during the testing, specifically FTA, SHALL be recorded according to [ISOIEC-19795-2], 7.3.
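The FRR calculation above can be sketched as follows. The function name `frr_percent` and the outcome labels are hypothetical; each mated transaction is assumed to have been recorded with one final outcome, where `"fta"` means that every attempt in the transaction was a failure to acquire.

```python
from typing import Iterable

VALID_OUTCOMES = {"accept", "reject", "fta"}


def frr_percent(mated_outcomes: Iterable[str]) -> float:
    """FRR in percent over a set of mated verification transactions.

    A transaction counts as a false reject if its decision is "reject"
    or if all of its attempts were failures to acquire ("fta").
    """
    outcomes = list(mated_outcomes)
    if not outcomes:
        raise ValueError("at least one mated transaction is required")
    unknown = set(outcomes) - VALID_OUTCOMES
    if unknown:
        raise ValueError(f"unknown outcome labels: {sorted(unknown)}")
    errors = sum(1 for outcome in outcomes if outcome != "accept")
    return 100.0 * errors / len(outcomes)
```

This returns only the point estimate; the requirement itself is stated against the upper bound of an 80% confidence interval, which is not computed here.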

3.3.3. False Accept Rate (FAR)

Requirement

FAR is measured at the transaction level. The requirement at the various levels is given in Section FIDO Certification Criteria.

For BioLevel 1 and BioLevel 1+, the False Accept Rate SHALL meet the requirement of less than 1:100 for the upper bound of an 80% confidence interval. For BioLevel 1 and BioLevel 1+, documented self-attestation of at least 1/10000 false accept rate is also required and described in Section Documented Self-Attestation FAR.

For BioLevel 2 and BioLevel 2+, the False Accept Rate SHALL meet the requirement of less than 1:10,000 for the upper bound of an 80% confidence interval.

FAR SHALL be estimated as follows (see also [ISOIEC-19795-1], 8.3.3):

The false accept rate is the expected proportion of non-mated transactions that will be incorrectly accepted. A transaction may consist of one or more non-mated attempts depending on the decision policy.

The false accept rate SHALL be estimated as the proportion (or weighted proportion) of recorded zero-effort impostor transactions that were incorrectly accepted.

Note: Please note that for the weighted proportion of recorded zero-effort impostor transactions the weights will be equal for each user as there will always be 5 impostor transactions per enrolled user.
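A short derivation illustrates why the weighting makes no difference here. It assumes that the weighted proportion is the average of per-user proportions, that each of the n enrolled users is attacked in exactly m = 5 zero-effort impostor transactions, and that a_i of those are incorrectly accepted for user i. The symbols n, m, and a_i are introduced only for this illustration.

```latex
\mathrm{FAR}_{\text{weighted}}
  = \frac{1}{n}\sum_{i=1}^{n}\frac{a_i}{m}
  = \frac{\sum_{i=1}^{n} a_i}{n\,m}
  = \mathrm{FAR}_{\text{unweighted}},
  \qquad m = 5 .
```

Because every user contributes the same number of transactions, the per-user average and the pooled proportion coincide.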

The false accept rate will depend on the decision policy, the matching decision threshold, and any threshold for sample quality. The false accept rate SHALL be reported with these details, alongside the estimated false reject rate at the same values (or plotted against the false reject rate at the same threshold(s) in an ROC or DET curve).

FAR is computed through offline testing based on biometric references and stored verification transactions collected during online testing.

The vendor provides an SDK which takes a biometric reference and a stored verification transaction as input and returns the decision to “accept” or “reject”. Each decision used in computing the FAR is based on an inter-person (between-person) combination of a biometric reference and a stored verification transaction captured during verification.
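The offline procedure can be sketched as a loop over inter-person combinations. Everything named here is hypothetical: `sdk_decide` stands in for the vendor SDK call, whose real interface is vendor-specific, and `collect_impostor_decisions` is an illustrative helper. The sketch enumerates every between-person pair; which pairs are actually used in an evaluation is governed by the test procedures, not by this example. Records are keyed by person rather than by test subject so that two fingers of the same individual are never treated as an impostor pair.

```python
from itertools import product
from typing import Any, Callable, Dict, List, Tuple


def collect_impostor_decisions(
    references: Dict[str, Any],
    stored_transactions: List[Tuple[str, Any]],
    sdk_decide: Callable[[Any, Any], str],
) -> List[Tuple[str, str, str]]:
    """Run the (hypothetical) SDK on every inter-person combination.

    references: person_id -> biometric reference.
    stored_transactions: (person_id, stored verification transaction) pairs.
    sdk_decide: callable returning "accept" or "reject".
    Returns (reference_person, probe_person, decision) tuples.
    """
    decisions = []
    for (ref_person, reference), (probe_person, stored) in product(
        references.items(), stored_transactions
    ):
        if ref_person == probe_person:
            continue  # same person: a mated comparison, not used for FAR
        decisions.append((ref_person, probe_person, sdk_decide(reference, stored)))
    return decisions
```

Passing the SDK as a callable keeps the enumeration logic independent of any particular vendor interface and makes it easy to substitute a stub when checking the harness itself.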

The actual achieved FAR SHALL be documented by the laboratory, together with all other information about the test as per [ISOIEC-19795-1] and [ISOIEC-19795-2].

The threshold, or operational point, SHALL be fixed during testing. It is set by the Vendor. The threshold SHALL be the same as the threshold used for FRR.

The maximum number of attempts allowed per verification transaction SHALL be fixed during testing. It is set by the Vendor.

Limitation

For the purposes of this test, the definition of verification attempts and transactions defined in false reject rate on-line testing SHALL be used for each off-line verification transaction.

The calculation of FAR SHALL be based on the following equation:

FAR (%) = (Number of zero-effort impostor transactions for which decision is Accept) / (Number of zero-effort impostor transactions conducted) * 100

A false accept error SHALL be declared if the stored verification transaction results in a match decision. Since FAR is calculated off-line based on previously stored verification transactions, Failure to Acquire SHALL NOT be considered in computation of FAR.
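The FAR equation above can be illustrated with a minimal sketch. The function name `far_percent` and the string labels `"accept"` and `"reject"` are assumptions made for illustration; the sketch also assumes that transactions ending in a failure to acquire have already been excluded from the input.

```python
from typing import Iterable


def far_percent(decisions: Iterable[str]) -> float:
    """Return FAR in percent from zero-effort impostor transaction decisions.

    Each item is "accept" or "reject" (hypothetical labels). Transactions
    that ended in a failure to acquire are assumed to be excluded already.
    """
    decisions = list(decisions)
    if not decisions:
        raise ValueError("no impostor transactions recorded")
    accepted = sum(1 for d in decisions if d == "accept")
    return accepted / len(decisions) * 100


# Illustrative data only: 2 false accepts in 20,000 impostor transactions.
example = ["accept"] * 2 + ["reject"] * 19_998
print(f"FAR = {far_percent(example):.4f}%")
```

The result is a point estimate only; whether the TOE meets the requirement depends on the upper bound of the confidence interval described in Statistics and Test Size.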

Option

A Vendor MAY at their choice claim lower FAR than the 1:10,000 requirement set by FIDO. The procedures for submitted test data SHALL follow methods described in Documented Self-Attestation FAR (Optional).

Note: The FAR is an error that is related to a non-mated comparison, where the attacker will spend no effort in order to be recognised as a different individual but simply uses their own biometric characteristic. This metric does not provide any information on how the TOE would behave in cases where an attacker mounts a dedicated attack.

3.3.4. Multibiometric Performance Testing

For TOEs that collect all biometric data for fusion prior to a decision, Testing SHALL be performed as written where subjects SHALL provide all modalities as part of authentication. FAR and FRR for the combined decision remain the same.

For TOEs that operate based on sequential fusion (i.e., where a decision resulting from initial biometric(s) determines whether subsequent biometric(s) need to be collected), the laboratory SHALL create a test protocol. If it is up to the user which modality goes first, a test plan SHALL be designed based on the information the vendor provides regarding the TOE and approved by the FIDO biometric secretariat. For example, the laboratory may randomly assign the order of the modalities for each transaction. If it is up to the TOE which modality goes first, a test plan SHALL be designed based on the information the vendor provides regarding the TOE and approved by the FIDO biometric secretariat. Methods for computing FAR and FRR remain the same and are based on the combined final decision.

For TOEs that utilize different fusion approaches depending upon the environment, the laboratory SHALL test each of these algorithms in a manner similar to Section Test Environment.

Note: For example, if face modality is always captured first, followed by fingerprint, only if needed, the test plan will consider face first. In another example, if the TOE chooses which modality goes first, the test lab shall consider this in the test plan.

3.3.5. Documented Self-Attestation FAR

Self-attestation for FAR is optional for BioLevel 2 and BioLevel 2+, but required for BioLevel 1 and BioLevel 1+. If the vendor establishes self-attestation for FAR, the following requirement applies. The vendor SHALL attest to an FAR of [1:10,000, or 1:25,000 or 1:50,000 or 1:75,000 or 1:100,000] at an FRR of 5% or less. This claim SHALL be supported by test data as described in Documented Self Attestation (Optional) and documented through a report submitted from the Vendor to the Laboratory. The Laboratory SHALL validate the report follows FIDO requirements described in Documented Self Attestation (Optional) and supports the claim. The laboratory SHALL compare the FAR bootstrap distribution generated as a result of the independent testing and determine if it is consistent with the self-attestation value. The arithmetic mean of the bootstrap distribution SHALL be less than or equal to the self-attestation value. If this is not met, the self-attestation value SHALL NOT be added to the meta-data.
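The consistency check described above (arithmetic mean of the FAR bootstrap distribution compared with the self-attested value) can be sketched as follows. The names `ALLOWED_CLAIMS` and `self_attested_far_is_consistent` are hypothetical, and the bootstrap FAR values are assumed to be fractions rather than percentages.

```python
from statistics import fmean

# Self-attestation values listed in this section, expressed as fractions.
ALLOWED_CLAIMS = (1 / 10_000, 1 / 25_000, 1 / 50_000, 1 / 75_000, 1 / 100_000)


def self_attested_far_is_consistent(bootstrap_fars, claimed_far):
    """Return True if the mean bootstrap FAR does not exceed the claim.

    bootstrap_fars: FAR of each bootstrap sample from independent testing.
    claimed_far: one of ALLOWED_CLAIMS (assumption: claims are restricted
    to the values listed in this section).
    """
    if claimed_far not in ALLOWED_CLAIMS:
        raise ValueError("claimed FAR is not one of the listed values")
    return fmean(bootstrap_fars) <= claimed_far


# Illustrative values only: the mean of this toy distribution is 3e-5.
toy_distribution = [2e-5, 3e-5, 4e-5]
print(self_attested_far_is_consistent(toy_distribution, 1 / 25_000))
print(self_attested_far_is_consistent(toy_distribution, 1 / 50_000))
```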

3.3.6. Documented Self-Attestation FRR

Self-attestation for FRR is optional for BioLevel 2 and BioLevel 2+, but required for BioLevel 1 and BioLevel 1+.

If the vendor chooses self-attestation for FRR, the following requirement applies. The vendor SHALL attest to an FRR at no greater than 5% as measured when determining the self-attested FAR. In other words, self attestation for FRR is only possible when self attesting for FAR. This claim SHALL be supported by test data as described in Documented Self-Attestation (Optional) and documented through a report submitted from the Vendor to the Laboratory. The Laboratory SHALL validate the report follows FIDO requirements described in Documented Self-Attestation (Optional) and supports the claim. The laboratory SHALL compare the FRR measured as a result of the independent testing and determine if it is consistent with the self-attestation value. The FRR measurement SHALL be less than or equal to the self-attestation value. If this is not met, the self-attestation value SHALL NOT be added to the meta-data.

3.3.7. Maximum Number of Biometric References from Multiple Fingers

Requirement

If a subject enrolls multiple fingers (e.g. index and thumb) and uses them interchangeably (i.e. one OR another), the FAR increases where the FAR for two fingers enrolled is approximately twice the FAR for one finger enrolled. This section describes the process such that a biometric system can be certified to operate with two or more enrolled fingers. Other biometric modalities where this may apply are described in notes below.

If the analysis below is not performed, the maximum number of biometrics references SHALL default to one.

The vendor SHALL declare the maximum number of different fingers which can be enrolled. The FAR associated with multiple biometric references (FARMT) SHALL be calculated according to the following and SHALL NOT be greater than 1:10,000.

FARMT = 1 - ((1 - FARSA)^B)

where:

B = Maximum number of biometric references

FARSA = Self-attested FAR verified by FIDO according to Section Self-Attestation FAR (Optional).
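As a hedged illustration of the FARMT calculation, the sketch below evaluates the formula and searches for the largest number of references that stays within the 1:10,000 limit. The function names and the search cap of 10 references are assumptions made for this example.

```python
def far_multi(far_single: float, num_references: int) -> float:
    """FARMT = 1 - (1 - FARSA)^B for B interchangeable references."""
    return 1.0 - (1.0 - far_single) ** num_references


def max_references(far_single: float, limit: float = 1 / 10_000, cap: int = 10) -> int:
    """Largest B (up to a hypothetical cap) with FARMT not greater than limit.

    Values exactly at the limit may be affected by floating-point rounding,
    so boundary cases should be checked by hand.
    """
    best = 0
    for b in range(1, cap + 1):
        if far_multi(far_single, b) <= limit:
            best = b
        else:
            break  # FARMT grows with B, so no larger B can pass
    return best


# Illustrative: a verified self-attested FAR of 1:50,000.
print(max_references(1 / 50_000))
```

For small FARSA the formula is close to B times FARSA, which matches the statement that two enrolled fingers give approximately twice the single-finger FAR.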

At the time of FIDO authenticator certification, the maximum number of biometric references which meet the FAR requirement MAY be stored in the meta-data and SHALL NOT be greater than the maximum number verified during biometric certification, according to the above. Self-attested FAR in the meta-data SHALL be based on the single biometric reference FAR.

Note: Some iris systems may enroll each eye separately and allow successful verification even if only one eye is presented. Eyes can be considered in place of fingers for this section, if applicable to the TOE.

Note: The same process may be used for other modalities which have a similar property, i.e., where multiple parts of the body can be used interchangeably, e.g. palm veins for right and left hand. The vendor SHALL submit how this property may apply to the modality of the TOE. The FIDO lab SHALL use the same process to assess the maximum number of biometric references.

3.4. FIDO Presentation Attack Detection Criteria

The requirement for IAPAR takes into account that vendors who seek to achieve certification through independent testing likely develop their systems to be stricter than the target requirements. This is to ensure that they pass certification, given the inherent variability that occurs in any test. In other words, if a requirement is set at X%, vendors will target a value much stricter than X% so that they do not risk failing due to variability from one test to the next.

3.4.1. Impostor Attack Presentation Accept Rate (IAPAR)

Requirement

A TOE with an IAPAR of less than or equal to 15% will meet BioLevel 1 and BioLevel 1+ requirements. A TOE with an IAPAR of less than or equal to 7% will meet BioLevel 2 and BioLevel 2+ requirements.

The threshold for the level and the level achieved is stored in the FIDO Metadata as well as the FIDO Biometric Component Certificate.

Each of the selected six Level A PAI species SHALL achieve an IAPAR of less than the threshold. Each of the selected eight Level B PAI species SHALL achieve an IAPAR of less than the threshold. Levels A and B are defined in Test Procedures for Presentation Attack Detection (PAD).

The actual achieved IAPAR for each PAI species SHALL be documented by the laboratory, together with all other information about the test.

The threshold, or operational point, SHALL be fixed during testing. It is set by the Vendor and SHALL correspond to the claimed False Accept Rate (FAR) value to be tested.

Limitation

The PAI SHALL be presented until the end of the verification transaction (when a decision is made). An accept or match results in an error.

IAPAR (%) = ((Number of Impostor Presentation Attack Transactions for which Decision is Accept) / (Total Number of Impostor Presentation Attack Transactions Conducted)) *100

IAPAR SHALL be calculated for each PAI Species. All errors encountered during the testing SHALL be recorded according to [ISOIEC-19795-2], 7.3.
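A per-species IAPAR calculation might look like the sketch below. The record layout (species name, decision label), the labels `"accept"`, `"reject"` and `"fta"`, and the function names are assumptions for illustration. The sketch keeps failure-to-acquire transactions in the denominator as non-errors, and it compares against the threshold with "less than or equal to", following the Requirement paragraph; both choices are assumptions that the laboratory should confirm.

```python
from collections import defaultdict


def iapar_by_species(transactions):
    """Return {species: IAPAR in percent} from (species, decision) records.

    Only "accept" counts as an error; "reject" and "fta" (failure to
    acquire) are counted as conducted transactions without error.
    """
    totals = defaultdict(int)
    accepts = defaultdict(int)
    for species, decision in transactions:
        totals[species] += 1
        if decision == "accept":
            accepts[species] += 1
    return {s: 100.0 * accepts[s] / totals[s] for s in totals}


def all_species_meet(rates, threshold_percent):
    """True if every PAI species is at or below the threshold."""
    return all(rate <= threshold_percent for rate in rates.values())


# Illustrative data only, with hypothetical species names.
records = (
    [("gelatin", "accept")] * 1 + [("gelatin", "reject")] * 9
    + [("paper_print", "reject")] * 8 + [("paper_print", "fta")] * 2
)
rates = iapar_by_species(records)
print(rates, all_species_meet(rates, 15.0), all_species_meet(rates, 7.0))
```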

Note: A verification transaction ends when a decision is made. One or more failures to acquire may occur prior to a decision. The verification transaction (maximum number of verification attempts and timeout period) for a TOE is defined in the Allowed Integration Document as described in Verification Transactions. A failure to acquire for an impostor presentation attack transaction does not count as an error, as some systems may produce a failure to acquire in response to a presentation attack.

Note: ISO/IEC 30107-3:2017 defines the metric as Impostor Attack Presentation Match Rate (IAPMR). A correction is currently pending publication for ISO 30107-3 which changes the name to Impostor Attack Presentation Accept Rate (IAPAR) such that it is consistent with biometric performance metrics.

3.4.2. Rate Limits

Additional requirements in the FIDO Authenticator Security Requirements may impact the biometric TOE under evaluation herein. Those are tested as part of FIDO Authenticator Certification. As part of these requirements, FIDO Authenticators are required to rate-limit user verification attempts according to FIDO Authenticator Security Requirements, Requirement 3.9. For the purposes of biometric certification testing, rate limiting SHOULD be turned off and the test laboratory SHALL limit the number of attempts per transaction to five.

4. Common Test Harness

For each operating point to be evaluated, the Vendor SHALL provide a biometric system component of the FIDO authenticator which has at a minimum:

- Configurable Enrollment system which:

- Selects the operating point(s) to be evaluated.

- Has enrollment hardware / software as will be executed by the FIDO authenticator.

- Includes a biometric data capture sensor and enrollment software.

- Can clear an enrollment.

- Can store an enrollment from acquired biometric sample(s) for use in on-line verification evaluation.

- Can provide enrollment biometric references from acquired biometric sample(s) defined as “user’s store reference measure based on features extracted from enrollment samples” for use in off-line verification evaluation.

- Indicates a failure to enroll ([ISOIEC-19795-1], 4.6.1)

- Configurable Biometric Verification on-line system which:

- Selects the operating point(s) to be evaluated.

- Has verification hardware / software as will be executed by the FIDO authenticator.

- Includes a biometric data capture sensor, a biometric matcher, and a decision module.

- Captures features from an acquired biometric sample to be compared against a biometric reference.

- Makes accept/reject decision at a specific operating point.

- Indicates an on-line failure to acquire ([ISOIEC-19795-1], 4.6.2).

- Indicates an on-line decision (accept or reject).

- Provides the decisive sample(s) of an online verification transaction, i.e., all data used to make the verification transaction decision (this is called a stored verification transaction). This will be used for off-line verification.

- Configurable Biometric Verification off-line software, which:

- Selects the operating point(s) to be evaluated.

- Has verification software as will be executed by the FIDO authenticator.

- Accepts a biometric reference and the stored verification transaction and performs matching in off-line batch mode.

- Provides a decision (accept or reject).

- Logging capabilities, which:

- Record every interaction with the TOE.

- Allow the tester to manually add interactions (e.g. the fact that a tester just cleaned the sensor device).

Note: For Enrollment, some vendors MAY use multiple samples per test subject (e.g. multiple impressions for a single finger). A biometric reference can be based on multiple stored samples. This SHOULD be opaque to the tester.

Note: For a Stored Verification Transaction, the Test Harness SHALL store all attempts in a transaction. This will be used for off-line verification testing.
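The off-line batch mode described above can be sketched as a loop over inter-person combinations of biometric references and stored verification transactions. Everything named here is hypothetical: the `verify` callable stands in for the vendor-provided off-line software, and the dictionary layout keyed by subject identifier is an assumption. The sketch treats each key as a distinct person, so it does not handle the case where several fingers or eyes of one person are enrolled as separate test subjects.

```python
from typing import Callable, Dict, List, Tuple

Decision = str  # "accept" or "reject" (hypothetical labels)


def run_offline_batch(
    references: Dict[str, bytes],
    stored: Dict[str, List[bytes]],
    verify: Callable[[bytes, bytes], Decision],
) -> List[Tuple[str, str, int, Decision]]:
    """Compare every reference with every other subject's stored transactions.

    Returns (reference_id, subject_id, transaction_index, decision) records.
    """
    results = []
    for ref_id, reference in references.items():
        for subject_id, transactions in stored.items():
            if subject_id == ref_id:
                continue  # inter-person (non-mated) combinations only
            for index, transaction in enumerate(transactions):
                results.append(
                    (ref_id, subject_id, index, verify(reference, transaction))
                )
    return results


# Toy stand-in for the vendor matcher: accepts only byte-identical data.
def toy_verify(reference: bytes, transaction: bytes) -> Decision:
    return "accept" if reference == transaction else "reject"


toy_refs = {"a": b"A", "b": b"B"}
toy_stored = {"a": [b"A", b"B"], "b": [b"B"]}
batch = run_offline_batch(toy_refs, toy_stored, toy_verify)
print(batch)
```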

4.1. Security Guidelines

For security purposes, provided biometric references and verification transactions should be protected for confidentiality and data authentication using cryptographic algorithms listed within the FIDO Authenticator Allowed Cryptography List. The lab SHALL report to FIDO the process used to help assure TOE consistency and security.

Note: For example, only the vendor and FIDO Accredited Laboratory should have the ability to decrypt this information. To help assure TOE consistency, the vendor could use different keys to protect/authenticate the data collected from each tested allowed integration. The test result data specific to particular combinations of operating points and integrations could include that configuration information within the authentication.

5. Test Procedures for FAR/FRR

Biometric Performance Testing SHALL be completed by using the Scenario Test approach, an evaluation in which the end-to-end system performance is determined in a prototype or simulated application. See Section 4.4.2 in ([ISOIEC-19795-1]).

Testing shall be performed using the Common Test Harness defined in Common Test Harness.

5.1. Test Crew

The Test Crew is the Test Subjects gathered for evaluation.

5.1.1. Number of Subjects

For BioLevel 1 and BioLevel 2 certification, the minimum number of subjects for a test SHALL be 25, based on [ISOIEC-19795-1] and associated analysis in the Statistics and Test Size section of this document.

For BioLevel 1+ and BioLevel 2+ certification, the minimum number of subjects for a test SHALL be 245, based on [ISOIEC-19795-1] and associated analysis in the Statistics and Test Size section of this document.

For fingerprint, up to four different fingers from a single person can be considered as different test subjects. For the fingerprint biometrics, these SHALL be constrained to the index, thumb, or middle fingers, and SHALL be the same as was used for enrollment. A minimum of 123 unique persons SHALL be in the test crew.

Note: Having 123 test subjects will only require the use of 2 fingerprints per test subject at minimum. Allowing 4 fingers per test subject should allow the laboratory to acquire additional data if needed. It also allows better alignment of the laboratory's test results with the test results of a potential self-attestation.

For eye-based biometrics, both the left and right eye can be considered as two different test subjects. A minimum of 123 unique persons SHALL be in the test crew.

Note: Two eyes cannot be considered as different test subjects if both eyes are enrolled at one time.

In the event there is an enrollment failure according to Enrollment Transaction Failures, an additional Subject SHALL be enrolled for each enrollment failure.

5.1.2. Population

The population SHALL be experienced with the TOE in general and SHALL be given a possibility to try and acquaint themselves with the TOE before starting to enroll and prior to performing verification transactions. The population SHALL be motivated to succeed in their interaction with the TOE and they SHALL perform a large number of interactions with the TOE during a short period of time.

The population SHALL be representative of the target market in relationship to age and gender. Age and gender recommendations are taken from [ISOIEC-19795-5] for access control applications (Section 5.5.1.2 and 5.5.1.3). The following targets SHALL be used for age and gender. Minor deviations from these numbers may be acceptable if agreed by the FIDO biometric secretariat.

5.1.2.1. Age

Age Distribution Requirements

| Age | Distribution |
|---|---|
| < 18 | 0% |
| 18-30 | 25-40% |
| 31-50 | 25-40% |
| 51-70 | 25-40% |
| > 70 | 0% |

Note: For all BioLevels, the population shall still adhere to these age distribution requirements.

5.1.2.2. Gender

Gender Distribution Requirements

| Gender | Distribution |
|---|---|
| Male | 40-60% |
| Female | 40-60% |

Note: For all BioLevels, the population shall still adhere to these gender distribution requirements.
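A laboratory could check a planned test crew against the age and gender targets with a sketch along these lines. The record layout (age in years, gender label), the labels `"male"` and `"female"`, and the function names are assumptions; minor deviations agreed with the FIDO biometric secretariat are not modelled.

```python
AGE_BANDS = {"18-30": (18, 30), "31-50": (31, 50), "51-70": (51, 70)}
AGE_SHARE = (0.25, 0.40)      # allowed share of the crew per age band
GENDER_SHARE = (0.40, 0.60)   # allowed share of the crew per gender


def age_band(age):
    """Return the band name for an age, or None if under 18 or over 70."""
    for name, (low, high) in AGE_BANDS.items():
        if low <= age <= high:
            return name
    return None


def crew_meets_targets(crew):
    """crew: list of (age, gender) tuples. True if all targets are met."""
    n = len(crew)
    if n == 0:
        return False
    bands = [age_band(age) for age, _ in crew]
    if None in bands:
        return False  # the under-18 and over-70 targets are both 0%
    for name in AGE_BANDS:
        share = bands.count(name) / n
        if not AGE_SHARE[0] <= share <= AGE_SHARE[1]:
            return False
    for gender in ("male", "female"):
        share = sum(1 for _, g in crew if g == gender) / n
        if not GENDER_SHARE[0] <= share <= GENDER_SHARE[1]:
            return False
    return True


# Illustrative crew of 20: age bands 6/7/7, genders 10/10.
crew = (
    [(22, "male")] * 3 + [(25, "female")] * 3
    + [(40, "male")] * 4 + [(45, "female")] * 3
    + [(60, "male")] * 3 + [(65, "female")] * 4
)
print(crew_meets_targets(crew))
```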

Note: As indicated in [ISOIEC-19795-1], ideally, the test subjects SHOULD be chosen at random from a population that is representative of the people who will use the system in the real application environment. In some cases, however, the test subjects do not accurately represent the real-world users. If the test crew comes from the vendor’s employee population, they MAY differ significantly from the target users in terms of educational level, cultural background, and other factors that can influence the performance with the chosen biometric system.

5.1.3. Statistics and Test Size

The following sections describe the statistical analysis of the data which results from both on-line tests for assessment of FRR and off-line tests for assessment of FAR. Testing will result in a matrix of accepts and rejects for each verification transaction. This data can be used to calculate the upper bound of the confidence interval through the bootstrapping method described in this section, which is used in determining whether the TOE meets the requirements set in Section Requirements.

5.1.3.1. Bootstrapping: FAR

Bootstrapping is a method of sampling with replacement for the estimation of the FAR distribution curve. Bootstrap calculations will be conducted according to [ISOIEC-19795-1], Appendix B.4.2, where v(i) is a specific test subject, where i = 1 to n, where n is the total number of test subjects:

- Sample n test subjects with replacement v(1),...,v(n).

- For each v(i), sample with replacement (n-1) non-self biometric references.

- For each v(i), sample with replacement m transactions made by that test subject.

- This results in one bootstrap sample of the original data (i.e. a new set of data which has been sampled according to steps 1-3). Intra-person comparisons SHALL be avoided if more than one finger or eye is used for each subject.

Please note that the bootstrapping algorithm works on the level of transactions and is agnostic of the individual attempts made in each transaction. As the FAR is the error rate that is tested here, it is not relevant whether a certain transaction comprised 1, 2 or the maximum number of allowed attempts.

A false accept rate is obtained for each bootstrap sample. The steps above are repeated many times. At least 1,000 bootstrap samples SHALL be used, giving a false accept rate (FAR) for each. The distribution of the bootstrap samples for the false accept rate is used to approximate that of the observed false accept rate.

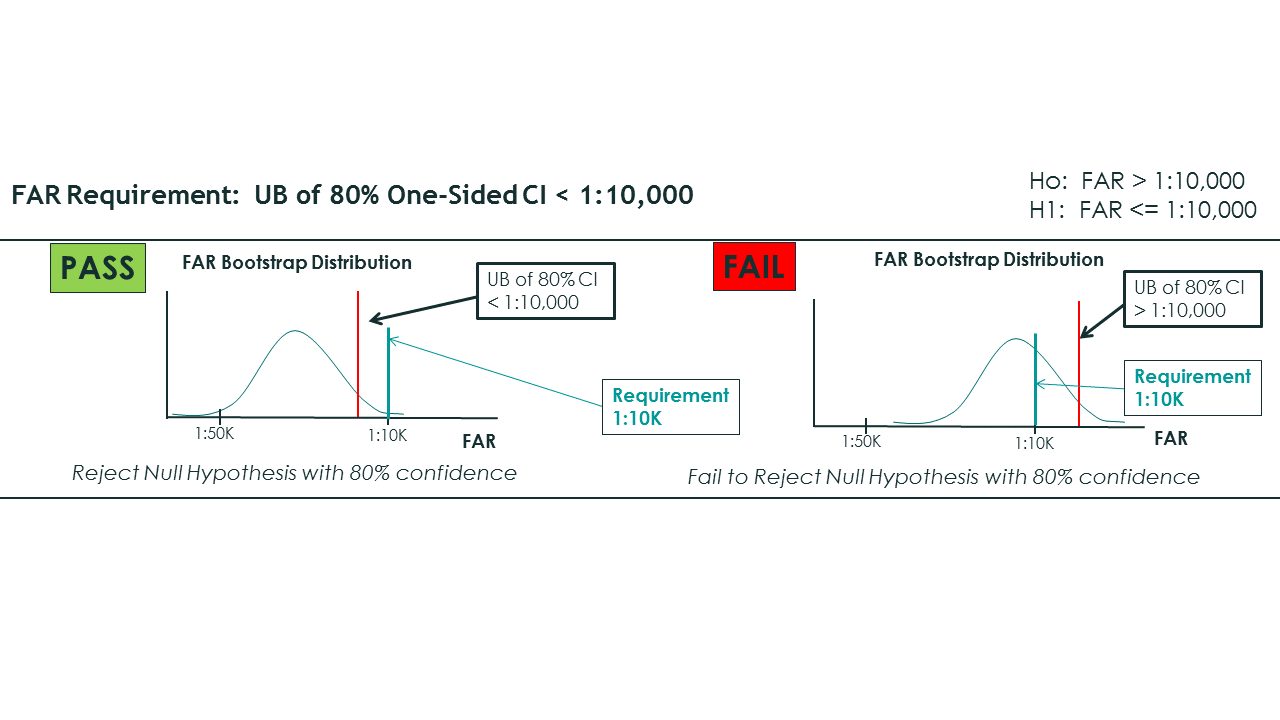

- One-sided upper 100(1-α)% confidence limit is computed from the resulting distribution, where the upper bound is set at 80%

- If the upper limit is below the FAR threshold (e.g. 1:10,000), there is reasonable confidence that the standard is met.
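The three sampling steps and the upper confidence limit can be sketched in plain Python as follows. This is an illustrative sketch, not the ISO reference procedure: the nested-list layout `accepts[v][r][t]`, the function name, the empirical-quantile convention, and the assumption that every subject has the same number of usable transactions (no failures to acquire) are all choices made for this example. It also treats every index as a different person, so it does not exclude intra-person pairs when several fingers or eyes per person are used.

```python
import math
import random


def bootstrap_far(accepts, n_boot=1000, level=0.80, seed=0):
    """Return (bootstrap FARs, one-sided upper limit at `level`).

    accepts[v][r][t] is 1 if stored transaction t of subject v was accepted
    against the biometric reference of subject r (r != v), otherwise 0.
    Requires at least two subjects.
    """
    rng = random.Random(seed)
    n = len(accepts)
    fars = []
    for _ in range(n_boot):
        errors = total = 0
        for v in rng.choices(range(n), k=n):           # step 1: subjects
            others = [r for r in range(n) if r != v]
            m = len(accepts[v][others[0]])
            refs = rng.choices(others, k=n - 1)        # step 2: references
            txns = rng.choices(range(m), k=m)          # step 3: transactions
            for r in refs:
                for t in txns:
                    errors += accepts[v][r][t]
                    total += 1
        fars.append(errors / total)
    fars.sort()
    # Empirical quantile: smallest value with at least `level` of samples
    # at or below it (one of several possible conventions).
    index = min(len(fars) - 1, max(0, math.ceil(level * len(fars)) - 1))
    return fars, fars[index]


# Toy data: 4 subjects, 5 transactions each, one false accept in total.
n, m = 4, 5
toy = [[None if r == v else [0] * m for r in range(n)] for v in range(n)]
toy[0][1][2] = 1
fars, upper = bootstrap_far(toy)
print(f"80% upper limit of bootstrap FAR: {upper:.4f}")
```

With the full test size of 245 subjects, a pure-Python loop like this is slow; it is meant to show the order of the sampling steps rather than to serve as a production tool.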

Note: Simulations of the bootstrapping process were performed using settings required by FIDO in order to determine the mean FAR associated with the Upper Bound of the Confidence Interval. The following settings were used: 245 subjects (n), 1 enrollment per subject, 5 verification transactions (m), 298,900 total impostor comparisons from N = nm(n−1), errors randomly distributed across the 298,900 comparisons, and 1,000 bootstraps created using the ISO method. (Unlike this simulation, in the results from a laboratory test, it is possible to have fewer than 298,900 comparisons, as some transactions may result in an FTA.)

Note: (continued) When the Upper Bound (UB) of the Confidence Interval of the bootstrap distribution is set to 1:10,000, the mean FAR is necessarily below 1:10,000. The table below provides the mean FAR associated with 68%, 80%, and 95% UB Confidence Intervals when the UB is set to 1:10,000. For example, to achieve an 80% upper bound, in this simulation, the mean FAR is 1:13,000.

Table: Mean of Bootstrapping Distribution Associated with Different Upper Bounds of Confidence Interval set to 1:10,000

| Upper Bound (UB) of Confidence Interval Set to 1:10,000 | Number of Errors to Achieve UB | Mean of FAR Bootstrap Distribution Associated with UB |
|---|---|---|
| 68% | 27 (out of 298,900) | 1/11,000 |
| 80% | 23 (out of 298,900) | 1/13,000 |
| 95% | 17 (out of 298,900) | 1/18,000 |

Figure 1 provides a schematic of the bootstrap distribution and FAR requirement. A biometric sub-system passes biometric certification if the upper bound of the 80% one-sided confidence interval derived from the bootstrap distribution is less than 1:10,000.

Bootstrapping FAR Schematic

5.1.3.2. Bootstrapping: FRR

Bootstrapping is a method of sampling with replacement for the estimation of the FRR distribution curve. Bootstrap calculations will be conducted according to [ISOIEC-19795-1], Appendix B.4.2, where v(i) is a specific test subject, where i = 1 to n, where n is the total number of test subjects:

- Sample n test subjects with replacement v(1),...,v(n).

- For each v(i), sample with replacement m transactions made by that test subject.

- This results in one bootstrap sample of the original data (i.e. a new set of data which has been sampled according to steps 1-2).

A false reject rate is obtained for each bootstrap sample. The steps above are repeated many times. At least 1,000 bootstrap samples SHALL be used, giving a false reject rate (FRR) for each. The distribution of the bootstrap values for the false reject rate is used to approximate that of the observed false reject rate.

- One-sided upper 100(1-α)% confidence limit is computed from the resulting distribution, where the upper bound is set at 80%

- If the upper limit is below the FRR threshold (e.g. 3 in 100), there is reasonable confidence that the standard is met.
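The two-level FRR resampling can be sketched in the same way. The list-of-lists layout `rejects[v]` (1 for a false reject, 0 otherwise, one entry per mated transaction of subject v), the function name, and the quantile convention are assumptions made for illustration.

```python
import math
import random


def bootstrap_frr_upper(rejects, n_boot=1000, level=0.80, seed=0):
    """Return the one-sided upper limit of the bootstrap FRR distribution.

    rejects[v] lists the outcomes of subject v's mated transactions:
    1 for a false reject, 0 for an accept.
    """
    rng = random.Random(seed)
    n = len(rejects)
    frrs = []
    for _ in range(n_boot):
        errors = total = 0
        for v in rng.choices(range(n), k=n):                   # subjects
            sample = rng.choices(rejects[v], k=len(rejects[v]))  # transactions
            errors += sum(sample)
            total += len(sample)
        frrs.append(errors / total)
    frrs.sort()
    index = min(len(frrs) - 1, max(0, math.ceil(level * len(frrs)) - 1))
    return frrs[index]


# Toy data: 25 subjects with 5 mated transactions each, 2 false rejects.
toy_rejects = [[0] * 5 for _ in range(25)]
toy_rejects[3][0] = 1
toy_rejects[17][4] = 1
frr_upper = bootstrap_frr_upper(toy_rejects)
print(f"80% upper limit of bootstrap FRR: {frr_upper:.4f}")
```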

5.1.3.3. Rule of 3: FAR

In the event that there are zero errors in the set of non-mated comparisons, the TOE meets the FAR requirement on the basis of the "Rule of 3".

Note: The "Rule of 3" method is utilized to establish an upper bound if there are zero errors in the test, according to [ISOIEC-19795-1], Appendix B.1.1. As long as the laboratory utilizes at least n=245 subjects, this results in n(n-1)/2 or 29,890 combinations (N). The Rule of 3 states that the upper bound of the 95% confidence interval is 3/N, or 0.0100%. For an 80% upper bound, the upper bound is 1.61/N or approximately 0.0054%, which meets the FIDO FAR requirement of 0.01%. The following table provides the number of subjects needed to meet the Rule of 3 for lower FAR values and when two (a=2) instances (fingers or eyes) are used.

Note: For BioLevel 1 and BioLevel 2, with 25 subjects there are 300 combinations, and 1.61/300 gives an upper bound of 0.536% FAR (<1%).
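The constants 3 and 1.61 used in the notes above are approximately -ln(0.05) and -ln(0.20). A small sketch of the zero-error upper bound, assuming independent comparisons, is given below; the function names are hypothetical.

```python
import math


def combinations(n: int, a: int = 1) -> int:
    """Number of combinations C = a^2 * n * (n - 1) / 2.

    n is the number of people and a the number of unique samples
    (fingers or eyes) per person.
    """
    return a * a * n * (n - 1) // 2


def zero_error_upper_bound(num_comparisons: int, confidence: float = 0.95) -> float:
    """Upper bound on the error rate when zero errors were observed.

    Uses -ln(1 - confidence) / N, which gives about 3/N at 95% and about
    1.61/N at 80% (assumption: comparisons are independent).
    """
    return -math.log(1.0 - confidence) / num_comparisons


for people, samples in [(245, 1), (123, 2), (25, 1)]:
    c = combinations(people, samples)
    bound_80 = zero_error_upper_bound(c, confidence=0.80)
    print(f"n={people}, a={samples}: C={c}, 80% bound={bound_80:.6%}")
```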

Table: Rule of 3 for FAR

| Rule of 3 ([ISOIEC-19795-1]) | | | | | |
|---|---|---|---|---|---|
| FAR | 0.0100% | 0.0040% | 0.0020% | 0.0013% | 0.0010% |
| | 1:10,000 | 1:25,000 | 1:50,000 | 1:75,000 | 1:100,000 |
| One unique sample per person (e.g., one finger or one eye) | | | | | |
| # of people needed (n) | 245 | 390 | 550 | 675 | 775 |
| # Combinations C = n(n-1)/2 | 29890 | 75855 | 150975 | 227475 | 299925 |
| Claimed error = 3/C (when zero errors in C combinations) | 0.0100% | 0.0040% | 0.0020% | 0.0013% | 0.0010% |
| Two unique samples per person (e.g., two fingers or two eyes) | | | | | |
| # of people needed (n) | 123 | 195 | 275 | 335 | 388 |
| # unique samples (a) | 2 | 2 | 2 | 2 | 2 |
| # Combinations C = (a^2)*n*(n-1)/2 | 30012 | 75660 | 150700 | 223780 | 300312 |
| Claimed error = 3/C (when zero errors in C combinations) | 0.0100% | 0.0040% | 0.0020% | 0.0013% | 0.0010% |

5.1.3.4. Rule of 3: FRR

In the event that there are zero errors in the set of genuine comparisons, the TOE meets the FRR requirement on the basis of the Rule of 3.

Note: The "Rule of 3" method can be utilized to establish an upper bound if there are zero errors in the test, according to [ISOIEC-19795-1], Appendix B.1.1. As long as the laboratory utilizes at least 245 people, this results in 245 mated comparisons. Rule of 3 states the upper bound of the 95% confidence interval is 3/N (3/245) or 1.22%. For an 80% upper bound, the upper bound is 1.61/N or 0.65%, which meets the FIDO FRR requirement of 5%.

Note: for BioLevel 1 and BioLevel 2, we have 25 subjects, 25 genuine comparisons, and 1.61/25 leads to 6.4% FRR (<7%)

5.1.4. Test Visits

As this test is focused on False Accept Rate, collection from test subjects MAY occur in one visit.

5.1.5. Test Environment

In this context it should be noted that the definition of the testing environment of the TOE (which is based on the environment of the TOE described in the Allowed Integration Document) plays an important role in the context of the certification. For this reason, potential environment(s) shall also be described in the Allowed Integration Document. Every certificate will identify the testing environment that the biometric component has been tested under. The definition of the environment may also have an impact on the testing activities. Testing shall always be carried out under consideration of the intended environment. The test requirements in this document allow for a certain variation in environmental conditions. However, such variations have their limits. This could lead to a situation where the laboratory shall perform a test multiple times or with a larger number of test subjects if a TOE has a very diverse definition of environments.

The question of whether a specific intended environment will lead to additional requirements for testing has to be seen in the context of a specific Target of Evaluation and shall be discussed with the FIDO biometric secretariat during the review of the test plan. Environments that may lead to increased FRR (i.e. more inconvenience for the user) will not necessarily be evaluated as part of the testing plan. However, a testing plan may include multiple environments for cases where the TOE may have multiple configurations to address multiple environments.

For example, this could include (1) a different operating point (e.g. threshold for the matcher) for a noisy environment or (2) NIR-only face recognition in low/no light (with visible light used in normal light). If a TOE has multiple configurations that address different environments, then the TOE SHALL be tested for each configuration and the test plan SHALL incorporate the variations for the different environments that result in a different configuration.

5.1.6. Biometric Reference Adaptation

Some systems perform biometric reference updates, that is, the biometric reference is adapted after successful verification transactions.

Vendor SHALL inform the Laboratory whether biometric reference adaptation is employed and SHALL give instructions on what number of correct matches SHOULD be performed in order to have the TOE adequately trained before the testing. For the purposes of testing, the Biometric Reference Adaptation SHALL be turned off, after the TOE has been fully trained on correct biometric references.

Note: Biometric reference adaptation which requires an extensive amount of time may incur increased cost of the laboratory test.

The offline software SHALL utilize biometric references in the same way as the online software.

5.1.7. Enrollment

Enrollment procedures SHALL be provided in writing to the Laboratory by the Vendor. These procedures SHALL be followed by the Test Crew. Instructions MAY be provided in any form, including interactive on screen guidance to the Test Subject. The Administrator SHALL record any FTE, if appropriate, with any divergence from enrollment instructions that MAY have caused the failure.

5.2. Test Methods

Testing will be performed through a combination of Online and Offline Testing ([ISOIEC-19795-1]).

5.2.1. Pre-Testing Activities

Pre-test activities SHALL be performed according to [ISOIEC-19795-2]:

- Section 6.1.8 Pre-test procedures

- Section 6.1.8.1 Installation and validation of correct operation

5.2.2. Online Testing

This section will focus on Online Testing.

To facilitate estimation of false accept rate, all biometric references and all stored verification transactions are stored to allow for offline computation of the FAR.

5.2.2.1. Online: Enrollment

Enrollment SHALL be performed according to [ISOIEC-19795-1], 7.3.

5.2.2.1.1. Pre-Enrollment

Before enrollment, test subjects MAY perform practice transactions.

5.2.2.1.2. Enrollment Transactions

Enrollment transactions SHALL be conducted without test operator guidance with the exception that the test operator may instruct the user to perform 5 mated transactions. Additionally, the operator is allowed to provide guidance as far as it concerns the test situation. Any kind of guidance SHALL be provided by the biometric authentication system/capture sensor in a similar way to the final application.

The enrollment process will be different depending on the biometric authentication system. This process MAY allow enrollment after one attempt, or MAY require multiple presentations and attempts. For testing, this process SHALL be similar to the final application.

5.2.2.1.3. Enrollment Transaction Failures

A failure to enroll SHALL be declared when the biometric authentication system is not able to generate a biometric reference for the test subjects after executing three enrollment transactions.

5.2.2.2. Online: Mated Verification Transaction

Mated verification transactions SHALL be performed according to [ISOIEC-19795-1], 7.4. This means that the following requirements SHALL be met:

Mated transaction data shall be collected in an environment, including noise, that closely approximates the target application. This test environment shall be consistent throughout the collection process. The motivation of test subjects, and their level of training and familiarity with the system, should also mirror that of the target application.

The collection process should ensure that presentation and channel effects are either uniform across all users or randomly varying across users. If the effects are held uniform across users, then the same presentation and channel controls in place during enrolment should be in place for the collection of the test data. Systematic variation of presentation and channel effects between enrolment and test data will lead to results distorted by these factors. If the presentation and channel effects are allowed to vary randomly across test subjects, there shall be no correlation in these effects between enrolment and test sessions across all users.

In the ideal case, between enrolment and the collection of test data, test subjects should use the system with the same frequency as the target application. However, this may not be a cost-effective use of the test crew. It may be better to forego any interim use, but allow re-familiarization attempts immediately prior to test data collection.

For systems that may adapt the biometric reference after successful verification, some interim use between enrolment and collection of mated attempt and transaction data may be appropriate. The amount of such use should be determined prior to data collection, and should be reported with results.

The sampling plan shall ensure that the data collected are not dominated by a small group of excessively frequent, but unrepresentative users.

Great care shall be taken to prevent data entry errors and to document any unusual circumstances surrounding the collection. Keystroke entry on the part of both test subjects and test administrators should be minimized. Data could be corrupted by impostors or genuine users who intentionally misuse the system. Every effort shall be made by test personnel to discourage these activities; however, data shall not be removed from the corpus unless external validation of the misuse of the system is available.

Users are sometimes unable to give a usable sample to the system as determined by either the test administrator or the quality control module. Test personnel should record information on failure-to-acquire attempts where these would otherwise not be logged. The failure-to-acquire rate measures the proportion of such attempts, and is quality threshold dependent. As with enrolment, quality thresholds should be set in accordance with vendor advice.

Test data shall be added to the corpus regardless of whether or not it matches a biometric reference. Some vendor software does not record a measure from an enrolled user unless it matches the biometric reference. Data collection under such conditions would be severely biased in the direction of underestimating false non-match error rates. If this is the case, non-match errors shall be recorded by hand. Data shall be excluded only for predetermined causes independent of comparison scores.

All attempts, including failures-to-acquire, shall be recorded. In addition to recording the raw image data if practical, details shall be kept of the quality measures for each sample if available and, in the case of online testing, the matching score or scores.

Note: Details for FIDO as they relate to the ISO requirements are discussed in the following sections.

5.2.2.2.1. Pre-Verification

Before genuine verification transactions, test subjects MAY perform practice transactions.

5.2.2.2.2. Mated Verification Transaction

Test Subjects SHALL conduct 5 genuine verification transactions. Mated verification transactions SHALL be conducted without test operator guidance. Any kind of guidance SHALL be provided by the biometric authentication system / capture sensor in a similar manner to the final application.

The verification process MAY be different depending on the biometric authentication system. This process MAY require multiple presentations. The verification transaction (maximum number of verification attempts and timeout period) for a TOE is defined in the Allowed Integration Document as described in Verification Transactions. A transaction SHOULD NOT exceed 30 seconds.

The authenticator vendor SHALL describe to the Accredited Biometric Laboratory what constitutes the start and end of a verification transaction.

The test harness SHALL provide the decisive sample(s) of a transaction for off-line testing, i.e., all data used to make the verification transaction decision.

5.2.2.2.3. Mated Verification Errors

A failure to acquire SHALL be declared when the biometric authentication system is not able to capture and / or generate biometric features during a verification attempt (an FTA MAY happen per attempt). The on-line verification test harness SHALL indicate to the laboratory when a failure to acquire has occurred.

Note: A failure to acquire will not be considered during off-line FAR testing.

A false rejection error SHALL be declared when the biometric authentication system fails to authenticate the test subject after executing the complete verification transaction.

The manner in which the laboratory records failures to acquire, false rejects, and true accepts is left to the laboratory, but recording SHALL be done automatically to avoid introducing human error.
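The following is a minimal sketch of automatic outcome recording, not a prescribed implementation. The `Attempt` record and both function names are hypothetical. It assumes that a transaction counts as a true accept if any attempt within it is acquired and matched, that any other completed transaction counts as a false reject, and that failures to acquire are tallied per attempt. How FTAs enter the FRR calculation is governed by the FRR requirements, not by this sketch.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass
class Attempt:
    """One verification attempt (hypothetical record layout)."""
    acquired: bool  # False means a failure to acquire (FTA) on this attempt
    matched: bool   # only meaningful when acquired is True


def transaction_outcome(attempts: List[Attempt]) -> str:
    """Classify one complete mated verification transaction."""
    if any(a.acquired and a.matched for a in attempts):
        return "true_accept"
    # Assumption: a completed transaction with no accepted attempt is a false reject.
    return "false_reject"


def tally(transactions: List[List[Attempt]]) -> Counter:
    """Count FTAs per attempt and accept/reject outcomes per transaction."""
    counts = Counter()
    for attempts in transactions:
        counts["fta_attempts"] += sum(1 for a in attempts if not a.acquired)
        counts[transaction_outcome(attempts)] += 1
    return counts
```

Because the tally is derived directly from logged attempts, no manual transcription step is needed between the test harness and the reported counts.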

5.2.2.2.4. FRR

False reject rate SHALL be calculated according to requirements in FRR and statistical analysis in Statistics and Test Size.

5.2.3. Offline Testing

Offline testing measures FAR and leverages all possible combinations between test subjects.

5.2.3.1. Offline: Software Validation

As the evaluation procedure might utilize online testing for the evaluation of false reject rate and offline testing for the evaluation of false accept rates, it is important for the evaluation laboratory to ensure that the offline biometric functionality is functionally equivalent to the online biometric functionality. The evaluation laboratory SHALL perform a series of genuine verification transaction tests online and offline and make sure that the results are the same.

The on-line verification testing SHALL result in a sequence of stored verification transactions and decisions for every transaction that did not have a failure to acquire. The off-line verification testing SHALL run these stored verification transactions in the same order and SHALL result in the exact same sequence of decisions. The complete sequences SHALL be compared by the laboratory to confirm that they are identical.
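The sequence comparison described above can be sketched as follows. This is an illustration only. The function name is hypothetical, and decisions are assumed to be stored as booleans (`True` for a match decision) in transaction order.

```python
from typing import Optional, Sequence


def first_divergence(online: Sequence[bool], offline: Sequence[bool]) -> Optional[int]:
    """Return the index of the first differing decision, or None if the
    two decision sequences are identical in both content and length."""
    for index, (on_decision, off_decision) in enumerate(zip(online, offline)):
        if on_decision != off_decision:
            return index
    if len(online) != len(offline):
        # One sequence is a strict prefix of the other.
        return min(len(online), len(offline))
    return None
```

A return value of `None` indicates that the offline module reproduced the online decisions exactly. Any other value points the laboratory to the first transaction to investigate.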

5.2.3.2. Offline: Non-mated Verification Transactions

5.2.3.2.1. Pre-Verification

To facilitate estimation of false accept rate, all enrollment transactions and verification transactions are stored to allow for offline computation of the FAR.

5.2.3.2.2. Non-mated Verification Transaction

The verification offline module provided by the vendor is used to compute all impostor (between person) combinations for estimating FAR.

Non-mated Verification transactions SHALL be performed according to [ISOIEC-19795-1], 7.6.1.1b, 7.6.1.2b, 7.6.1.3, 7.6.1.4, and 7.6.3.1. Different fingers or irises from the same person SHALL NOT be compared according to [ISOIEC-19795-1] 7.6.1.3.

5.2.3.2.3. Non-mated Verification Transaction Failures

The impostor verification process compares a biometric reference and a stored verification transaction from different persons.

For fingerprint, up to four different fingers from a single person can be considered as different test subjects. For eye-based biometrics, both the left and right eye can be considered as two different test subjects. However, impostor scores between two fingerprints or two irises from a single person SHALL be excluded from computation of the FAR.

A false accept error SHALL be declared if the stored verification transaction results in a match decision.

Note: It is not possible to obtain an FTA rate for FAR Offline Testing. FTAs are not considered in Offline Testing.
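As an illustration of the exclusion rule, the sketch below enumerates non-mated comparisons while skipping pairs that come from the same person. All names are hypothetical. It assumes that each test subject is identified by a (person, instance) pair, that each subject contributes one stored verification transaction, and that comparisons are ordered (reference, probe) pairs. The `decide` callable stands in for the vendor's offline verification module.

```python
from itertools import permutations
from typing import Callable, Iterator, List, Tuple

# Hypothetical representation: a test subject is (person_id, instance),
# e.g. ("p1", "right_index") or ("p2", "left_eye").
Subject = Tuple[str, str]


def non_mated_pairs(subjects: List[Subject]) -> Iterator[Tuple[Subject, Subject]]:
    """Yield ordered (reference, probe) pairs that come from different persons."""
    for reference, probe in permutations(subjects, 2):
        if reference[0] != probe[0]:  # skip fingers or eyes of the same person
            yield reference, probe


def count_false_accepts(
    subjects: List[Subject],
    decide: Callable[[Subject, Subject], bool],
) -> Tuple[int, int]:
    """Return (false accepts, non-mated comparisons); decide() returns True on a match."""
    false_accepts = 0
    comparisons = 0
    for reference, probe in non_mated_pairs(subjects):
        comparisons += 1
        if decide(reference, probe):
            false_accepts += 1
    return false_accepts, comparisons
```

For example, two persons with two enrolled fingers each form four test subjects and 12 ordered pairs, of which 4 are same-person pairs, leaving 8 non-mated comparisons.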

5.2.3.2.4. FAR

False accept rate SHALL be calculated according to requirements in FAR and statistical analysis in Statistics and Test Size.

5.3. Documented Self-Attestation (Optional)

5.3.1. Procedures for Documented Self-Attestation and FIDO Accredited Biometrics Laboratory Confirmation using Independent Data (Optional)

The previous sections are a description of certification by FIDO Accredited Biometrics Laboratory. The independent testing focuses on a maximum FAR level where the upper bound of the confidence interval for FAR MUST be less than 1:10,000 and FRR is [3:100]. Biometrics and platform vendors MAY choose to demonstrate a lower FAR: e.g. FAR @ 1:100,000 at a FRR of less than [3:100]. This section describes the processes for optional self-attestation for lower FAR of 1:X, e.g. 1:50,000 at the vendor’s discretion utilizing biometric data to which they have access.

Self-attestation is optional. If the vendor chooses self-attestation, the following requirements apply. The vendor SHALL follow all procedures that were described in the Test Procedures with the following definitions and exceptions:

- The Vendor SHALL attest to an FAR of [1:25,000, 1:50,000, 1:75,000, or 1:100,000, or others?] at an FRR of less than [3:100].

- The Vendor SHALL attest that the biometric system used for self-attestation is the same system functioning at the same operating point as the test harness submitted for FIDO independent testing.

- The number of subjects* SHALL be as given in the following table:

Documented Self-Attestation Number of Subjects

| | 1:25,000 | 1:50,000 | 1:75,000 | 1:100,000 |
|---|---|---|---|---|
| Number of Subjects* | 390 | 550 | 675 | 775 |

*Up to four different fingers or two irises from a person MAY be used as different subjects.

- To document that they followed the procedures the vendor SHALL provide a report which includes the information in § 7 Test Reporting Report to the Vendor.

In addition, the laboratory SHALL compare the FAR bootstrap distribution generated as a result of the independent testing and determine if it is consistent with the self-attestation value. The mean of the bootstrap distribution SHALL be less than or equal to the self-attestation value.
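The consistency check on the bootstrap mean can be sketched as below. This is an illustration under assumptions: the function name is hypothetical, and `bootstrap_fars` is assumed to hold the FAR estimates already produced by the bootstrap procedure defined in the FAR bootstrapping section. The sketch does not perform the resampling itself.

```python
from statistics import fmean
from typing import Sequence


def consistent_with_attestation(bootstrap_fars: Sequence[float], attested_far: float) -> bool:
    """True if the mean of the bootstrap FAR distribution does not exceed
    the self-attested FAR (e.g. 1 / 50_000 for an attestation of 1:50,000)."""
    return fmean(bootstrap_fars) <= attested_far
```

For instance, bootstrap estimates with a mean of 1.5 in 100,000 would be consistent with an attested FAR of 1:50,000, while estimates with a mean of 3.5 in 100,000 would not.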

6. Test Procedures for Presentation Attack Detection (PAD)

This section provides a testing plan for Presentation Attack (Spoof) Detection. It focuses on presentation attacks which require minimal expertise. The testing SHALL be performed by the FIDO-accredited independent testing laboratory on the TOE provided by the vendor. The evaluation measures the Impostor Attack Presentation Accept Rate (IAPAR), as defined in ISO 30107 Part 3.

PAD Testing shall be completed by using the following approach.

6.1. Test Crew

The Test Crew is the set of Test Subjects gathered for evaluation.

6.1.1. Number of Subjects

Number of subjects for a test SHALL be 15.

For fingerprints, PAD testing SHALL be constrained to the index, thumb, or middle fingers of the test subject.

The same test subjects as used for FRR testing may be used for PAD testing.

In the event there is an enrollment failure according to Enrollment Transaction Failures, an additional Subject SHALL be enrolled for each enrollment failure.

6.1.2. Population

The population SHALL be representative of the target market in relationship to age and gender. Age and gender recommendations are taken from [ISOIEC-19795-5] for access control applications (Section 5.5.1.2 and 5.5.1.3). The following targets SHALL be used for age and gender. Minor deviations from these numbers may be acceptable if agreed by the FIDO biometric secretariat.

6.1.2.1. Age

Age Distribution Requirements

| Age | Distribution |
|---|---|
| <18 | 0% |
| 18-30 | 25-40% |
| 31-50 | 25-40% |
| 51-70 | 25-40% |
| >70 | 0% |

6.1.2.2. Gender

Gender Distribution Requirements

| Gender | Distribution |
|---|---|
| Male | 40-60% |
| Female | 40-60% |

Note: As indicated in [ISOIEC-19795-1], ideally, the test subjects SHOULD be chosen at random from a population that is representative of the people who will use the system in the real application environment. In some cases, however, the test subjects do not accurately represent the real-world users. If the test crew comes from the vendor’s employee population, they MAY differ significantly from the target users in terms of educational level, cultural background, and other factors that can influence the performance with the chosen biometric system.
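A laboratory could check a candidate test crew against the age and gender targets with a helper along the following lines. This is a sketch only. The function name and the representation of a subject as an `(age, gender)` tuple are assumptions, and any deviation it reports may still be acceptable if agreed by the FIDO biometric secretariat.

```python
from typing import List, Tuple

# Target bands taken from the age and gender distribution requirements.
AGE_BANDS = [("18-30", 18, 30), ("31-50", 31, 50), ("51-70", 51, 70)]
GENDERS = ("male", "female")


def crew_deviations(crew: List[Tuple[int, str]]) -> List[str]:
    """Return a description of each target the crew misses (empty if none)."""
    if not crew:
        raise ValueError("crew must contain at least one subject")
    n = len(crew)
    problems = []
    out_of_range = sum(1 for age, _ in crew if age < 18 or age > 70)
    if out_of_range:
        problems.append(f"{out_of_range} subject(s) younger than 18 or older than 70")
    for label, low, high in AGE_BANDS:
        share = sum(1 for age, _ in crew if low <= age <= high) / n
        if not 0.25 <= share <= 0.40:
            problems.append(f"age {label}: {share:.0%} is outside 25-40%")
    for gender in GENDERS:
        share = sum(1 for _, g in crew if g == gender) / n
        if not 0.40 <= share <= 0.60:
            problems.append(f"{gender}: {share:.0%} is outside 40-60%")
    return problems
```

With a crew of 15, each age band has to contain between 4 and 6 subjects to fall within 25-40%, and each gender between 6 and 9 subjects to fall within 40-60%.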

6.1.3. Test Visits

Collection from test subjects MAY occur in one visit.

6.1.4. Enrollment

Enrollment procedures SHALL be provided in writing to the Laboratory by the Vendor. These procedures SHALL be followed by the Test Crew. Instructions MAY be provided in any form, including interactive on screen guidance to the Test Subject. The Administrator SHALL record any FTE, if appropriate, with any divergence from enrollment instructions that MAY have caused the failure.

6.2. Test Methods

Testing will be performed through Online Testing using the Common Test Harness defined in Common Test Harness (Optional).

6.2.1. Pre-Testing Activities

Pre-test activities SHALL be performed according to [ISOIEC-19795-2]:

- Section 6.1.8 Pre-test procedures

- Section 6.1.8.1 Installation and validation of correct operation

6.2.2. Testing for PAD

This section will focus on PAD Testing.

6.2.3. Enrollment

Each subject SHALL be enrolled. Enrollment SHALL be performed according to ISO/IEC 19795-1, 7.3. Presentation attacks will be performed against this enrollment. Similar to FRR/FAR testing, enrollment transactions will be performed without operator guidance, and the procedure is flexible with regard to the vendor in that it may allow multiple presentations for the enrollment.

The test lab SHALL also collect biometric characteristic data required for creating a Presentation Attack Instrument. For example, for fingerprint, a copy of the enrolled person’s fingerprint is needed and can be acquired via collection of a fingerprint image on a second fingerprint scanner or by leaving a latent print. The method used to acquire the biometric characteristic SHALL be consistent with the recipe of each Presentation Attack Instrument Species to be tested.

6.2.4. PAI Species

A Presentation Attack Instrument (PAI) is the device used when mounting a presentation attack. A PAI species is a set of PAIs which use the same production method, but only differ in the underlying biometric characteristic. Table 1 is a high level description of presentation attacks by level. The next section provides additional detail of PAI species for each modality.

The laboratory SHALL select PAI species appropriate for the biometric modality of the TOE.

PAI Species are described at a high level for fingerprint, face, iris/eye, and voice. If the TOE is a different biometric modality than these, the vendor SHALL propose a set of PAI species for Levels A and B for FIDO’s approval. Upon FIDO approval, the test laboratory can proceed with PAD evaluation.